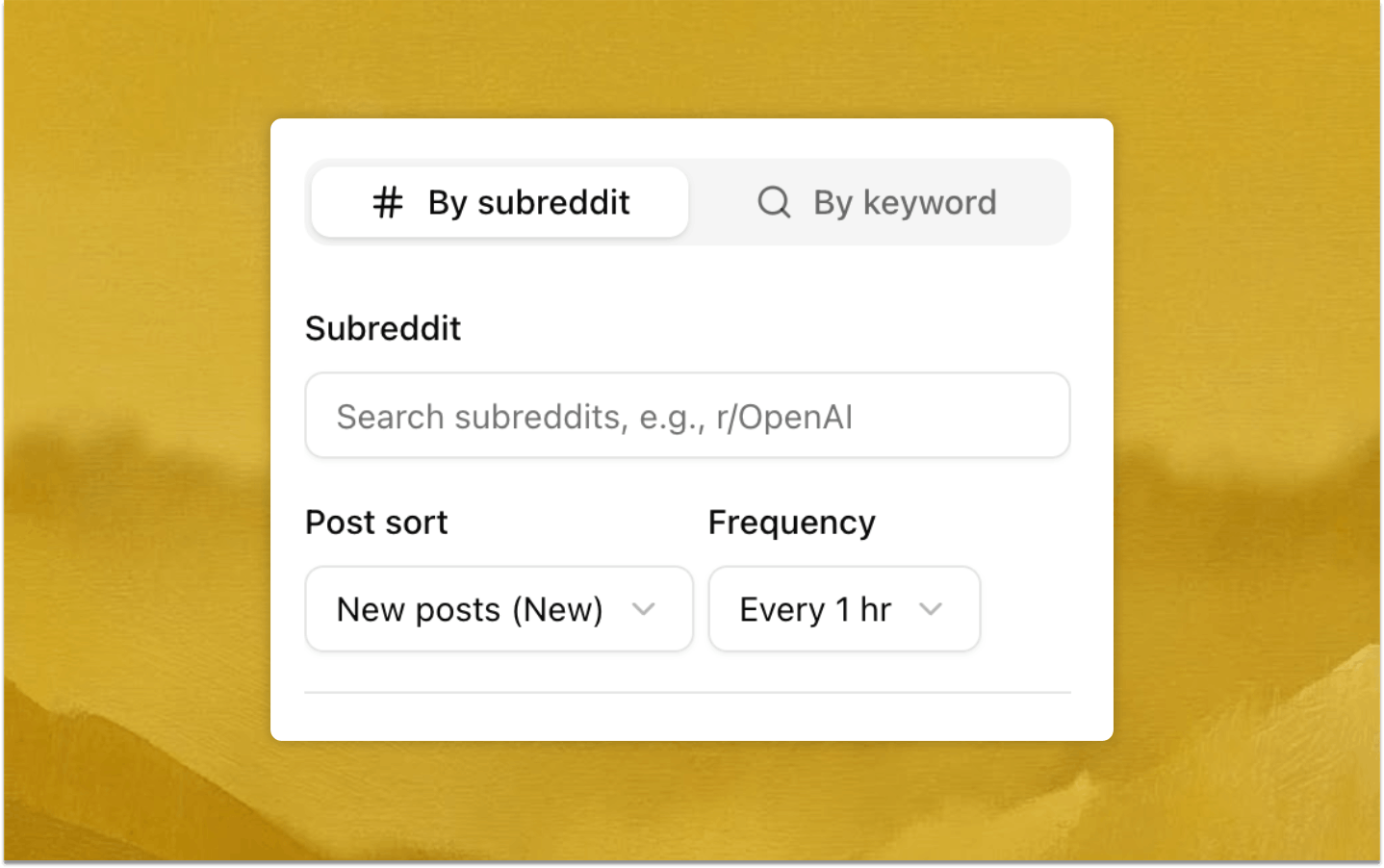

Dual-mode recurring capture for broader high-signal coverage

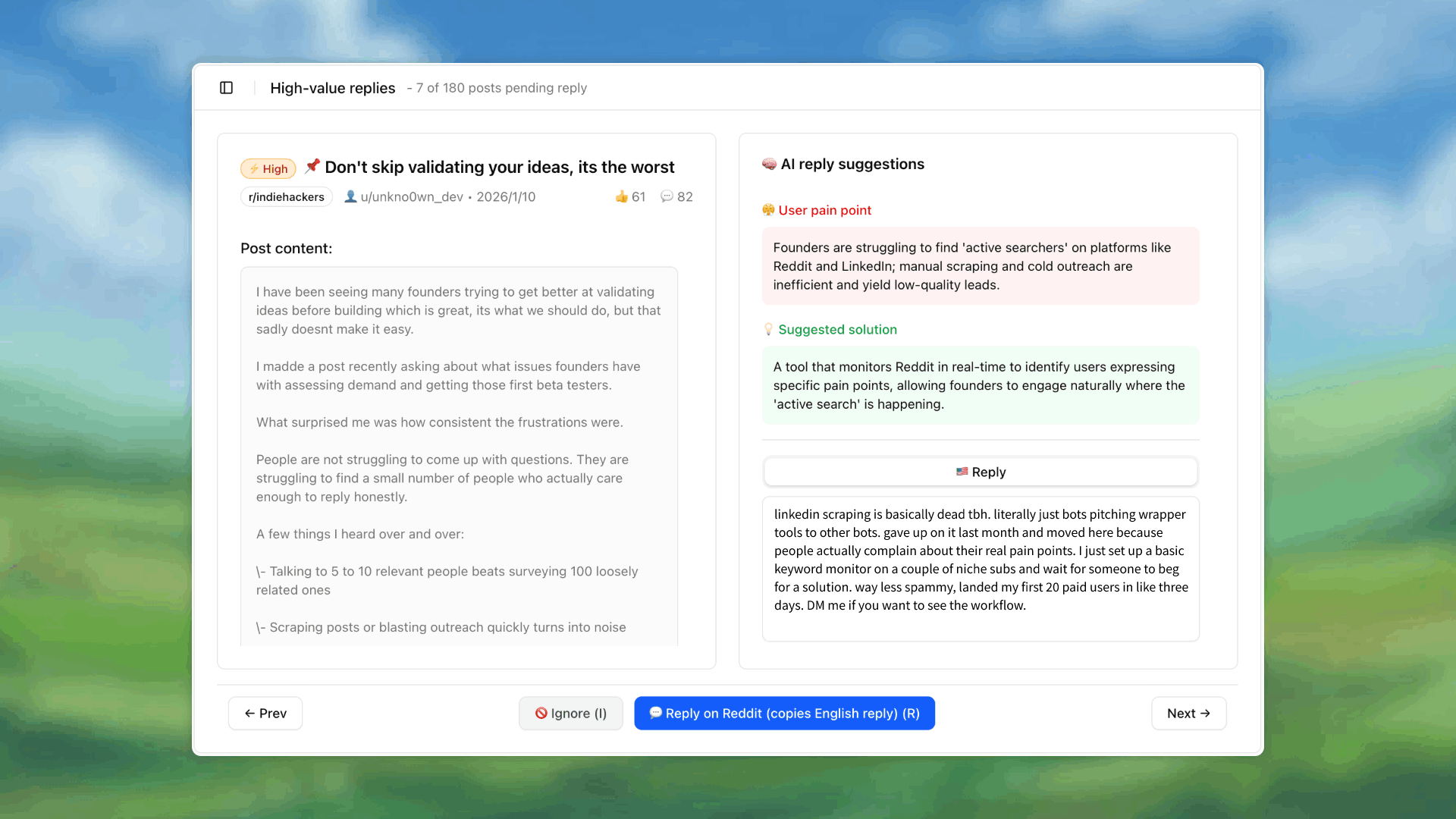

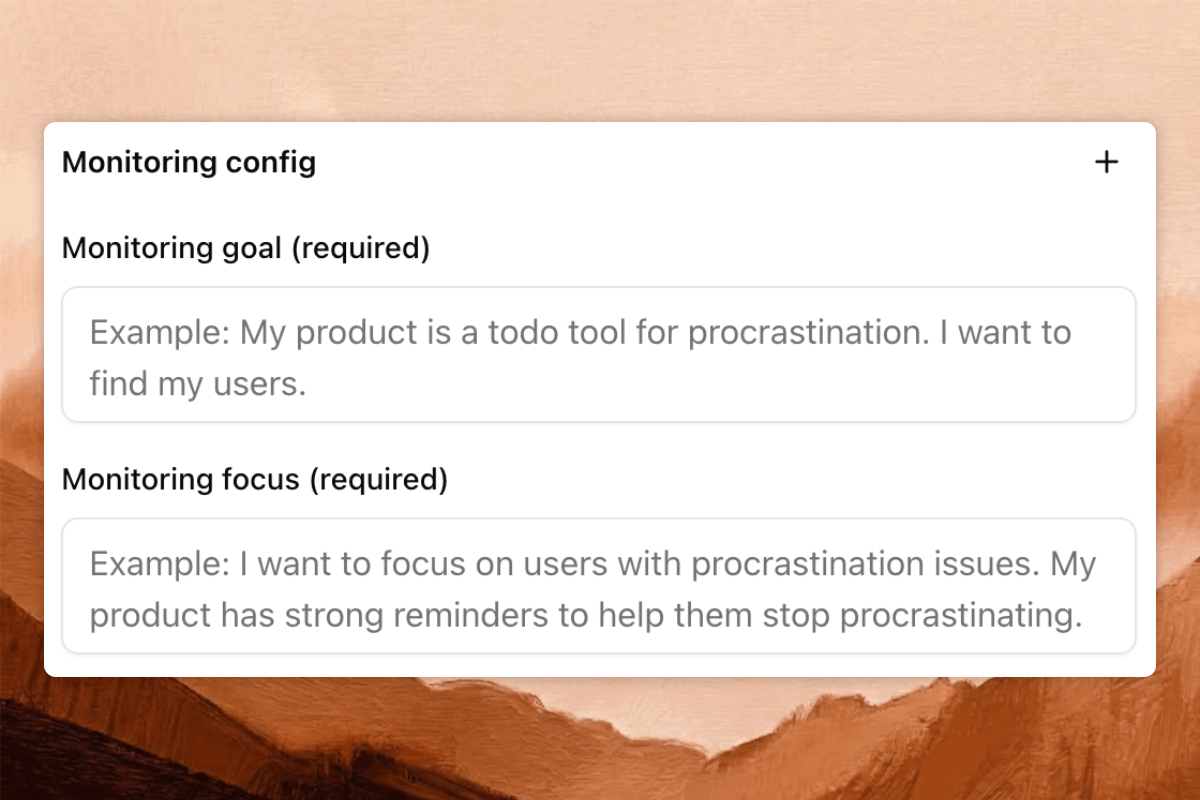

Run subreddit and keyword monitoring in parallel on a fixed cadence, so new threads are captured continuously with a traceable history.

- Run multiple monitoring plans in parallel across business goals.

- Tune sort and time windows for both rapid response and trend tracking.

- Replace memory-driven browsing with systemized recurring tasks.
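
As a rough illustration of the points above, the sketch below models two monitoring plans (one subreddit-based, one keyword-based) and picks the ones that are due on each scheduler tick. It is not the product's actual configuration format or API: `MonitoringPlan`, `due_plans`, the field names, and the example targets are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List


@dataclass(frozen=True)
class MonitoringPlan:
    """Hypothetical description of one recurring capture task."""
    name: str
    mode: str            # "subreddit" or "keyword" (the two modes in the text)
    target: str          # subreddit name or search phrase (illustrative values)
    sort: str            # assumed sort option, e.g. "new" or "top"
    time_window: str     # assumed time window, e.g. "hour" or "week"
    interval: timedelta  # fixed cadence between runs


def due_plans(
    plans: List[MonitoringPlan],
    last_run: Dict[str, datetime],
    now: datetime,
) -> List[MonitoringPlan]:
    """Return plans that have never run or whose interval has elapsed."""
    due = []
    for plan in plans:
        previous = last_run.get(plan.name)
        if previous is None or now - previous >= plan.interval:
            due.append(plan)
    return due


# Two plans running side by side: a fast one for rapid response,
# and a slow one for trend tracking.
PLANS = [
    MonitoringPlan("support-rapid", "subreddit", "r/example", "new", "hour",
                   timedelta(minutes=15)),
    MonitoringPlan("brand-trends", "keyword", "example product", "top", "week",
                   timedelta(hours=24)),
]
```

A scheduler loop would call `due_plans(PLANS, last_run, datetime.now())` on each tick, run the returned plans, and record the run time per plan name. That per-plan record is what would give each capture a traceable history in this sketch.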